
Voice editing reached podcast production software this September with @DescriptApp. It makes the process of creating "podcasts, videos, and transcripts" as "easy as typing". It is based on Lyrebird technology, acquired in 2019, and its use cases go beyond podcast editing to include meeting notes and multitrack editing. Check out their video; the results are impressive! You can get more details here:


An interesting interview from the Voicebot Podcast on voice apps which are not directly related to voice assistants. Here is the list:

1. Neurodegenerative disease detection and tracking
2. Submarine smart speakers
3. Accent reduction applied to call centers
4. Specialized ASR for identifying non-standard speech
5. Language learning assistant (and as an automated way to evaluate a job candidate's language knowledge)
6. Voice assistant for agriculture
7. Alternative microphones that can identify speech without hearing your vocalization
8. Animal audio analytics (e.g. to detect diseases)
9. Air traffic control talking to drones
10. Smart baby monitor to track linguistic development over the first 1-2 years and detect possible issues/delays

It's been quite a while since my last post. I have been listening to a wide variety of podcasts on machine learning and speech technologies, from both technical and business perspectives, so I've decided to post the interesting ones that I come across.

While I finish re-listening to it in order to take some notes and write a proper summary, here is an episode of "The Voice Tech Podcast" on "The Emotion Machine". In other words, how Behavioural Signals is adding speech emotion detection to call center technologies, among others.

Over the last few months, several IT companies have shown interest in speech recognition and translation technologies, which may add significant value to their products.

Now that Facebook has bought Wit.ai, we will soon be able to use speech recognition there as a quicker way to get text previews of voice clips. In Skype, speech technologies are used to talk to people with whom we do not share a common language, in the following way. First, transcriptions of the spoken utterances are obtained with a deep learning approach applied to speech recognition. Then, the recognized text is translated into another language using natural language processing techniques. Finally, this text is synthesized into audible speech.

Reviews of this new feature have already been published, pointing out both the limitations (accuracy of the translated words, when to take the turn to break in and translate) and the achievements (some language barriers start to be broken down, solutions to correct the recognized text before synthesis). Similarly, a universal speech translator will be available on Android smartphones as described here and here.

In real-world scenarios these applications will suffer from several difficulties (e.g. ambient noise, the large vocabulary used by the speaker, speaker accents, or speaking speed). Even so, it will be interesting to test how state-of-the-art techniques like deep learning perform for these applications.

Next, there are some videos showing Skype Translator demos. The first video is an introduction, followed by a live demo between English and German, and finally a demo between English and Spanish. Finally, there is a Google translation demo.

Skype demos

Google translation demos

This is an interesting TED Talk on a project that aims to help people who can’t speak by creating synthetic voices which are unique to each user, just like in the real world.

People with voice disorders may be able to control prosody (e.g. speech rate, pitch, and dynamics) but are not able to produce understandable speech. Based on the source-filter model of speech, this project’s main idea is to combine the user’s prosody (source) with the voice identity (filter) of a donor. A concatenative text-to-speech (TTS) system is built from units recorded from the donor, and the TTS is controlled by the user’s prosody.

Here is an interesting application for acoustically manipulating objects in three dimensions, developed by researchers at the University of Tokyo and the Nagoya Institute of Technology.

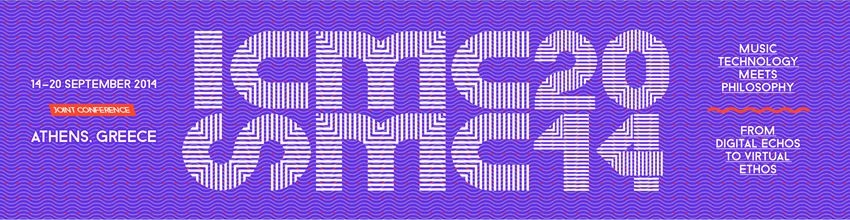

With the tested setup, the ultrasonic standing waves could handle objects with a diameter of 0.6mm, 1mm, and 2mm. Larger particles will be handled in future experiments. The authors consider that their method can be applied to manipulate objects in industry, to create a floating screen, and, even more interestingly, to handle objects under microgravity. The original publication can be found here.

It's been too long since my last post... Last September I was invited to participate in the joint ICMC|SMC|2014 Conference in Athens. More concretely, Johan Sundberg organized a workshop on the Synthesis of Singing Voice, which was held on September 18th. Carl Unander-Scharin and Ludvig Elblaus (from KTH) presented several artistic projects where singers can change their voice with their body gestures in real time. Unfortunately, Tod Machover, head of the Media Lab's Opera of the Future group, could not participate in the end. In my case, I presented several approaches to expression control in singing voice synthesis, which were followed by a debate with Johan Sundberg and the audience, with the participation of Roger Dannenberg, Anastasia Georgaki, Masataka Goto, and Xavier Serra, amongst others. It certainly provided interesting feedback about the concept of expression, the evaluation process, the purposes and applications of singing voice synthesis, and future challenges.

This is the material we used in the presentation at the SMAC poster session this summer. You can find a video showing the differences in expression performance between the default configuration and our framework setup. The poster PDF file is also available.

On October 13th, 2013, a promotional video presenting Freesound.org and its functionalities was released. Here it is!

In the context of singing voice synthesis, the generation of the synthesizer controls is a key aspect to obtain expressive performances. In our case, we use a system that selects, transforms and concatenates units of short melodic contours from a recorded database. This paper proposes a systematic procedure for the creation of such a database. The aim is to cover relevant style-dependent combinations of features such as note duration, pitch interval and note strength. The higher the percentage of covered combinations is, the less transformed the units will be in order to match a target score. At the same time, it is also important that units are musically meaningful according to the target style. In order to create a style-dependent database, the melodic combinations of features to cover are identified, statistically modeled and grouped by similarity. Then, short melodic exercises of four measures are created following a dynamic programming algorithm. The Viterbi cost functions deal with the statistically observed context transitions, harmony, position within the measure and readability. The final systematic score database is formed by the sequence of the obtained melodic exercises.

M. Umbert, J. Bonada, M. Blaauw, Systematic Database Creation for Expressive Singing Voice Synthesis Control, 8th ISCA Speech Synthesis Workshop (SSW8), Barcelona, Spain, September 2013.
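For readers unfamiliar with the dynamic programming step mentioned in the abstract, here is a minimal Viterbi-style sketch in Python. It is only an illustration under my own assumptions: the function `viterbi_min_cost`, the note candidates, and both toy cost functions are hypothetical and are not the cost functions used in the paper (which deal with context transitions, harmony, position within the measure and readability). The idea is simply to pick one candidate per position so that the sum of local and transition costs is minimal.

```python
from typing import Callable, List, Sequence, Tuple


def viterbi_min_cost(
    candidates: Sequence[Sequence[str]],
    local_cost: Callable[[int, str], float],
    transition_cost: Callable[[str, str], float],
) -> Tuple[float, List[str]]:
    """Pick one candidate per position, minimizing local + transition costs."""
    if not candidates or any(len(c) == 0 for c in candidates):
        raise ValueError("every position needs at least one candidate")

    # costs[j]: cost of the cheapest path ending in candidates[t][j]
    costs = [local_cost(0, cand) for cand in candidates[0]]
    back: List[List[int]] = []  # back[t - 1][j]: best predecessor index

    for t in range(1, len(candidates)):
        prev = candidates[t - 1]
        new_costs, pointers = [], []
        for cand in candidates[t]:
            # Cheapest way to reach this candidate from the previous position.
            best = min(
                range(len(prev)),
                key=lambda i: costs[i] + transition_cost(prev[i], cand),
            )
            new_costs.append(
                costs[best] + transition_cost(prev[best], cand) + local_cost(t, cand)
            )
            pointers.append(best)
        costs = new_costs
        back.append(pointers)

    # Backtrack from the cheapest final candidate.
    j = min(range(len(costs)), key=costs.__getitem__)
    total = costs[j]
    path = [candidates[-1][j]]
    for t in range(len(candidates) - 1, 0, -1):
        j = back[t - 1][j]
        path.append(candidates[t - 1][j])
    path.reverse()
    return total, path


# Toy example (hypothetical costs): prefer small pitch jumps and target notes.
SEMITONES = {"C": 0, "D": 2, "E": 4, "G": 7}
TARGET = ["C", "G", "E"]

total, path = viterbi_min_cost(
    candidates=[["C", "E"], ["D", "G"], ["C", "E"]],
    local_cost=lambda t, note: 0 if note == TARGET[t] else 1,
    transition_cost=lambda a, b: abs(SEMITONES[a] - SEMITONES[b]),
)
print(total, path)  # 5 ['C', 'D', 'E']
```

In this toy run the cheapest path skips the target note G in the middle because the pitch jumps needed to reach it cost more than the penalty for missing it, which is the kind of trade-off a set of weighted cost functions is meant to capture.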

Martí Umbert

This will be the place for news, event updates, and other interesting stuff found on the web.

Archives

September 2019
