On October 13th, 2013, a promotional video presenting Freesound.org and its functionalities was released. Here it is!

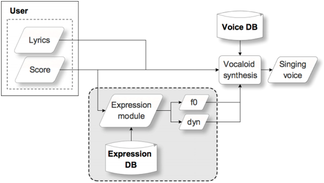

In the context of singing voice synthesis, the generation of the synthesizer controls is a key aspect to obtain expressive performances. In our case, we use a system that selects, transforms and concatenates units of short melodic contours from a recorded database. This paper proposes a systematic procedure for the creation of such a database. The aim is to cover relevant style-dependent combinations of features such as note duration, pitch interval and note strength. The higher the percentage of covered combinations, the less the units need to be transformed in order to match a target score. At the same time, it is also important that units are musically meaningful according to the target style. In order to create a style-dependent database, the melodic combinations of features to cover are identified, statistically modeled and grouped by similarity. Then, short melodic exercises of four measures are created following a dynamic programming algorithm. The Viterbi cost functions deal with the statistically observed context transitions, harmony, position within the measure and readability. The final systematic score database is formed by the sequence of the obtained melodic exercises.

M. Umbert, J. Bonada, M. Blaauw, Systematic Database Creation for Expressive Singing Voice Synthesis Control, 8th ISCA Speech Synthesis Workshop (SSW8), Barcelona, Spain, September 2013.

A common problem of many current singing voice synthesizers is that obtaining a natural-sounding and expressive performance requires a lot of manual user input, which makes it a time-consuming and difficult task. In this paper we introduce a unit selection-based approach for the generation of expression parameters that control the synthesizer. Given the notes of a target score, the system is able to automatically generate pitch and dynamics contours. These are derived from a database of singer recordings containing expressive excerpts. In our experiments the database contained a small set of songs belonging to a single singer and style. The basic length of units is set to three consecutive notes or silences, representing a local expression context. To generate the contours, first an optimal sequence of overlapping units is selected according to a minimum cost criterion. Then, these are time scaled and pitch shifted to match the target score. Finally, the overlapping, transformed units are crossfaded to produce the output contours. In the transformation process, special care is taken with respect to the attacks and releases of notes. A parametric model of vibratos is used to allow transformation without affecting vibrato properties such as rate, depth or underlying baseline pitch. The results of a perceptual evaluation show that the proposed approach is comparable to parameters that are manually tuned by expert users and outperforms a baseline system based on heuristic rules.

M. Umbert, J. Bonada, M. Blaauw, Generating Singing Voice Expression Contours Based on Unit Selection, Stockholm Music Acoustics Conference (SMAC), Stockholm, Sweden, July 2013.

So, today I start publishing this blog!

I'll try to update it from time to time, by keeping track of past, current and future news and events in the context of my PhD on Singing voice expression modelling and UPF's Music Technology Group. Other stuff related to music, speech processing, teaching and research might be posted as well. Further updates are on their way...

Martí Umbert

This will be the place for news, event updates, and other interesting stuff found on the web.
